Unified test automation powered by AI, cloud, and low-code

AI-POWERED TEST AUTOMATION

mabl harnesses multiple AI technologies, including generative AI, to extend test coverage, improve reliability, and reduce maintenance. We're committed to delivering innovations and honored to be recognized by Gartner as a leader in AI-powered software testing.

LOW-CODE FIRST

In our mission to democratize testing, mabl provides the best low-code experience to non-technical and business users, while also supporting the full code flexibility that developers crave to customize, extend, and create shared libraries.

BORN AND RAISED IN THE CLOUD

mabl is a modern, secure, cloud-native platform designed for scalability, delivering an unrivaled holistic approach to software quality and promoting efficiency and long-term maintainability.

“Mabl easily integrates with our workflows and CI/CD pipelines so that developers can run really robust tests without using a lot of engineering resources.”

Read the Story

"We went from 10% to 95% test automation coverage with 3 QAs and 5 developers. Our team is working with greater confidence and our customers are even happier."

Read the Story

"Mabl allows our team to focus on improving our product and user experience. The fast, consistent execution has been instrumental in showing the value of testing."

Read the Story

"Using traditional automation tools like Selenium would have taken 2 years and $240K to accomplish what ITS did with mabl in just 4 months at 80% cost savings."

Read the Story

"Mabl lets us accomplish in hours what we used to do in 2 weeks. Our team is increasing velocity with higher quality and providing more value to the business."

Read the Story

“My team started using mabl effectively in 6 weeks versus months with a traditional tool. They can easily maintain tests and our output has doubled as a result.”

Read the Story

“It’s about using automation to let people do their best work. Mabl helps more of our people play an active role in testing and think about higher value things.”

Read the Story

“With mabl, my team can scale testing when we need it most. We can run as many tests as needed in parallel without worrying about managing infrastructure.”

Read the Story

Quickly deliver new innovations with confidence to outpace your industry

Deliver world-class digital experiences that delight your customers

Achieve higher ROI on strategic Digital and DevOps investments

HOW MABL TRANSFORMS TEST AUTOMATION:

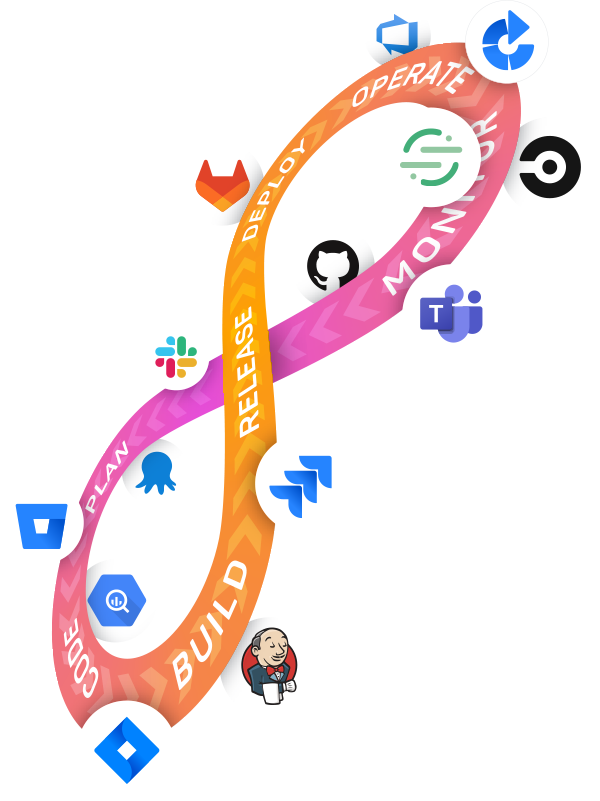

Build 90%+ end-to-end test coverage across functional and non-functional quality with low-code and code flexibility.

Reduce test maintenance and improve reliability to confidently scale testing across browsers, devices, and real-world scenarios.

Shift testing left to find and fix defects faster, and connect mabl to team collaboration tools for a shared understanding of quality.

Global security leader Barracuda relies on mabl to test their data protection products before deploying on a global scale.

Read how Senior Software Engineer Antony Robertson built - and broke - a custom framework in the quest to automate testing in CI/CD pipelines at Priceline.

Mabl’s Testing in DevOps Report surveyed 500+ development and QA leaders on the impact of automated testing tools, AI, and software testing on DevOps.

In our 4th Annual State of Testing in DevOps Report, 560 software development and QA professionals shared the status of their DevOps adoption journey, and how testing activities impact adoption, collaboration, and customer happiness.

Mabl is the leading unified test automation platform, built on cloud, AI, and low-code innovations, delivering a modern approach to ensuring the highest-quality software across the entire user journey. Our SaaS platform allows teams to scale functional and non-functional testing across web apps, mobile apps, APIs, performance, and accessibility for best-in-class digital experiences.