Library

Empower your team to build and release innovative software faster with AI-driven automation you can trust.

The 2024 State of Testing in DevOps Report

Explore the 2024 State of Testing in DevOps Report to uncover key insights on DevOps, testing, and the role of QA in collaboration & customer satisfaction.

The 2022 State of Testing in DevOps Report

Our 2022 State of Testing in DevOps Report shows how software development and QA professionals view their DevOps adoption journey.

DZone Refcard - Cloud-Based Automated Testing Essentials

In this Refcard, we will explore the basics of automated testing and delve into the details of how you can leverage mabl’s cloud-based automated testing solutions to dramatically improve your test suites and reduce the burden on both developers and testers.

The Era of Quality Engineering

We reviewed our survey of 600 industry professionals about their progress towards embracing DevOps, and explored the trends driving quality engineering.

Test Automation Buyer's Guide

While manual testing and automation are not new, historically these approaches have not enabled teams to maintain sufficient test coverage while contributing to product velocity.

Harness the Power of Low-code & Intelligence in Test Automation

We all know the countless benefits of automating workflows in the software development pipeline. Unfortunately, not all automation solutions are created equal, especially when it comes to test automation.

2022 Testing Trends to Watch: The Journey to Quality Engineering

Trends such as the migration to the cloud, the transition to DevOps, and growing customer expectations put a spotlight on QA teams. To continue delivering quality software quickly, we as an industry need to adjust our approach to testing by practicing quality engineering. But how do we get there?

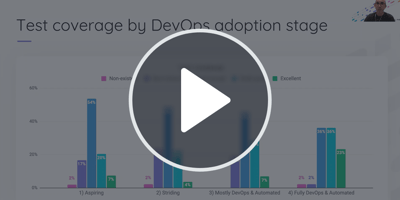

State of Testing in DevOps: Reflecting on an Industry Transformation

DevOps adoption has doubled over the past five years, spurred by the need to accelerate product velocity and pushed into hyperdrive by the pandemic and shift to digital-first.

State of Testing in DevOps 2021 Survey Report

Our annual report aims to share what over 600 respondents revealed about adoption of CI/CD, DevOps maturity, and the impact of test automation on business.

How to Plan and Execute Integrated End-to-End Tests

End-to-end testing (E2E) isn’t just another test to automate. Instead, it’s a method to test your product in ways that approximate real-world use cases from the perspective of your users and customers.

Guide to Testing in DevOps Pipelines

To reach the full potential of DevOps, teams need to consider software testing an integral part of the development process.

DevTestOps Landscape Report

Get this State of DevOps 2019 report to find out how the rise of DevOps and Continuous Delivery has affected testing and how much testing is being automated.