Your automation testing strategy

If your team struggles with automation testing, don’t feel alone or ashamed. I meet lots of people whose teams do all their regression testing manually. Other teams have automation but are finding it expensive to maintain and aren’t sure if the tests are providing a benefit.

Teams that lack automated regression tests struggle to deliver value to their customers frequently. Changing the code takes longer and longer, because more and more manual regression testing is needed to feel confident about releasing.

Where to start

Get a diverse group of delivery team members together to talk about what’s holding you back and whether automation testing might help with that roadblock. Think about the trade-offs between automating and doing testing manually. Both have a cost.

What’s the biggest roadblock in your way? When I ask this question, I often hear these answers: time, skills, budget for tools and test environments, and legacy code design that makes automating tests below the UI level difficult. Your biggest automation pain point may not even be the tests themselves. If, for example, your team doesn’t yet have continuous integration and wants to get into automation testing, I’d recommend you stop reading this and come back once that is set up.

As you review what your team wants to automate, evaluate your product’s risks along with the costs and benefits of automating a particular area of testing. Are you getting fast feedback as you introduce changes in the product? How long does it take to hotfix a production bug? You’ll want to set a small, achievable goal to start with, and find ways you can measure progress toward that goal.

In my experience, teams get the most long-term benefit from automating unit-level tests, especially if you’re practicing test-driven development so that the tests help you achieve solid code design. Tests at higher levels can be more expensive to automate, but there may be compelling reasons to start at those other levels. Let’s look at some models that can help your team discuss these trade-offs.

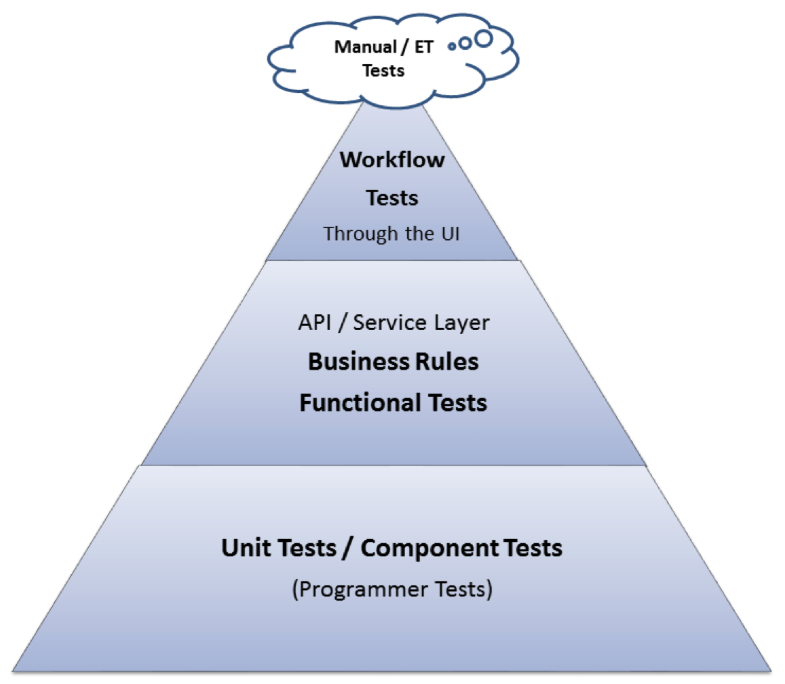

The test automation pyramid

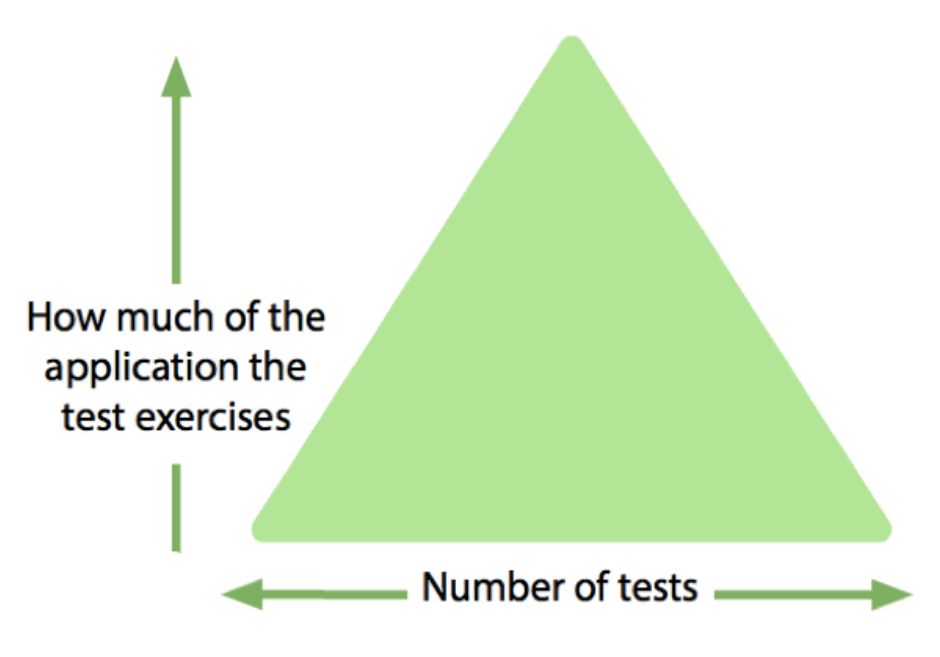

Over the years, my teams have found Mike Cohn’s “Test Automation Pyramid” a useful model to help us formulate our automation strategy. Yes, it’s actually a triangle rather than a pyramid, but what’s important is that it helps you visualize the steps to take as you tackle automation testing.

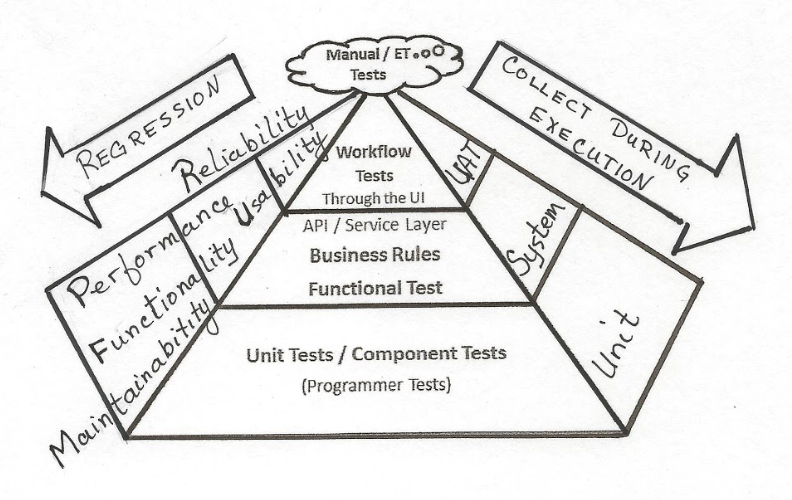

The pyramid shows that in general, you should try to push tests down to the lowest level possible. Unit tests are quick to write (once the team learns how), assure internal code correctness, and can be run in minutes before committing new code changes. These tests provide the solid foundation to your automation testing, but they generally can’t test everything.
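To make the bottom layer concrete, here is a minimal sketch of a unit-level test using Python's built-in `unittest` module. The `order_total` function and its discount rule are hypothetical, invented only for illustration; your own units will look different.

```python
import unittest


def order_total(prices, discount_rate=0.0):
    """Hypothetical function under test: sum the prices, then apply a discount."""
    if not 0.0 <= discount_rate <= 1.0:
        raise ValueError("discount_rate must be between 0 and 1")
    subtotal = sum(prices)
    return round(subtotal * (1 - discount_rate), 2)


class OrderTotalTest(unittest.TestCase):
    def test_sums_prices_without_discount(self):
        self.assertEqual(order_total([10.00, 5.50]), 15.50)

    def test_applies_discount_to_subtotal(self):
        self.assertEqual(order_total([100.00], discount_rate=0.25), 75.00)

    def test_rejects_invalid_discount(self):
        with self.assertRaises(ValueError):
            order_total([10.00], discount_rate=1.5)
```

Tests like these touch no database, network, or UI, which is why they can run on every change. Saved in a file, they can be run with `python -m unittest`.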

We also need to check functionality and other quality characteristics such as accessibility and security across larger pieces of the application with functional testing. For example, does an API endpoint return the correct values? These tests may take more time to create and, if they access databases and other services, provide slower feedback. If business stakeholders need to collaborate on acceptance tests to verify business rules, these middle layer tests can be written in plain language that everyone can understand.
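As a rough sketch of the "does an API endpoint return the correct values?" check, the example below starts a tiny stand-in HTTP service in the test process and asserts on its JSON response. The `/price/widget` endpoint, the `PriceHandler` class, and the payload are all assumptions made up for illustration; in a real suite you would point the test at your own service in a test environment.

```python
import http.client
import json
import threading
import unittest
from http.server import BaseHTTPRequestHandler, HTTPServer


class PriceHandler(BaseHTTPRequestHandler):
    """Hypothetical stand-in for the service under test."""

    def do_GET(self):
        if self.path == "/price/widget":
            body = json.dumps({"item": "widget", "price": 9.99}).encode()
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, format, *args):
        pass  # keep test output quiet


class PriceApiTest(unittest.TestCase):
    @classmethod
    def setUpClass(cls):
        # Port 0 asks the operating system for any free port.
        cls.server = HTTPServer(("127.0.0.1", 0), PriceHandler)
        cls.port = cls.server.server_port
        cls.thread = threading.Thread(target=cls.server.serve_forever, daemon=True)
        cls.thread.start()

    @classmethod
    def tearDownClass(cls):
        cls.server.shutdown()
        cls.server.server_close()

    def get(self, path):
        conn = http.client.HTTPConnection("127.0.0.1", self.port, timeout=5)
        try:
            conn.request("GET", path)
            response = conn.getresponse()
            return response.status, response.read()
        finally:
            conn.close()

    def test_price_endpoint_returns_expected_values(self):
        status, body = self.get("/price/widget")
        self.assertEqual(status, 200)
        self.assertEqual(json.loads(body), {"item": "widget", "price": 9.99})

    def test_unknown_path_returns_404(self):
        status, _ = self.get("/no-such-path")
        self.assertEqual(status, 404)
```

Even this small example needs a running server and a network round trip, which hints at why tests in this layer give slower feedback than unit tests.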

Products that have a user interface (UI) generally need to be tested across all these layers. The feedback loop from UI-level tests is longer, but they can find the most important regression failures. Today’s technology makes it easier to automate robust tests at the UI level, but many factors can still make these tests “flaky” and more costly to maintain. That’s why the model has the fewest tests at the top level.

The little cloud at the top of the pyramid represents testing that’s done manually. Even with solid automation at all layers of the triangle, your product might require a huge cumulonimbus cloud that includes exploratory testing, accessibility testing, security testing and many other critical testing activities. You might be able to use automation to speed up this manual testing.

Your app may require a different-shaped model. For example, a web-based product with lots of business logic in the UI may require more regression testing at that level. You can also investigate newer, alternative approaches to functional testing that don’t go through the UI, such as subcutaneous testing.
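One way to picture subcutaneous testing: the test drives the layer that sits just beneath the UI, so it exercises the same logic a click would, without a browser. The sketch below assumes a hypothetical `CartController` that the UI's event handlers would delegate to; the class and its `view_model` shape are illustrative, not taken from any particular framework.

```python
import unittest
from dataclasses import dataclass, field


@dataclass
class CartController:
    """Hypothetical layer that the UI's click handlers would delegate to."""

    items: dict = field(default_factory=dict)

    def add_item(self, sku, quantity=1):
        if quantity < 1:
            raise ValueError("quantity must be at least 1")
        self.items[sku] = self.items.get(sku, 0) + quantity

    def view_model(self):
        """Data the UI would render; no widgets or browser involved."""
        count = sum(self.items.values())
        return {"item_count": count, "checkout_enabled": count > 0}


class CartSubcutaneousTest(unittest.TestCase):
    def test_checkout_disabled_for_empty_cart(self):
        self.assertFalse(CartController().view_model()["checkout_enabled"])

    def test_adding_items_enables_checkout(self):
        cart = CartController()
        cart.add_item("SKU-1", quantity=2)
        cart.add_item("SKU-1")
        self.assertEqual(
            cart.view_model(), {"item_count": 3, "checkout_enabled": True}
        )
```

This approach only works if the UI is kept thin enough to delegate its decisions to such a layer, which is a design trade-off worth discussing as a team.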

The test automation triangle, simplified

There are many variations on the pyramid model, so take time to check those out and see if they work better for your team. Your context is unique, so experiment to find what helps you map out your first steps to automation testing success. I like the way that Seb Rose maps out the concept behind the pyramid. You want as many tests as possible that test smaller chunks of the application, but you still need to check the “big picture”.

An actual pyramid!

In Chapter 15 of More Agile Testing, Sharon Robson explains a four-step process she uses to talk about automation testing. She starts with the basic pyramid, but adds on the tools that can be used to execute the tests, the types of tests or system attributes to be tested, and finally the automated regression tests needed.

A DevOps model

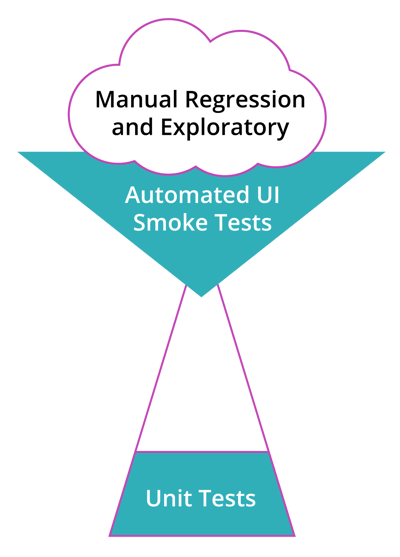

In her Practical Guide to Testing in DevOps (p. 130), Katrina Clokie inverts the triangle model to use the different layers of tests as the “DevOps bug filter”. The unit-level filter finds the tiny unit-level bugs. The integration layer catches bigger bugs that extend between different layers of the app. End-to-end tests catch the more complicated bugs. Then she adds on the information captured in production that can help detect bugs and evaluate product quality: alerting, monitoring, and logging.

If none of these models sings to your team, check out the links in the reference section at the end of this post. And if none of those help you get started formulating your strategy, grab some markers and a whiteboard (physical or virtual) and start drawing together to visualize what levels of your application need automation testing, and where you want to start.

Starting your first experiment

Your whole delivery team needs to agree on a starting strategy for automation. Automation testing can be scary and difficult, so you need to be willing to stick to it during the painful parts.

You may decide on a strategy that involves more than one layer of the triangle. I worked on a team that committed to learning how to do test-driven development, knowing the process was going to take many months. We shared the burden of manual regression testing through the UI at first, and had no automated tests at all, just a cloud of manual regression tests.

We decided to automate UI smoke tests for the critical areas of the application. After eight months, we no longer had to do manual regression testing. By then, we also had a small, growing library of automated unit tests. We still did a lot of manual testing on new features and at the services layer of our application. We had an upside-down sort of triangle that is typical of teams trying to succeed with automation testing.

We still had a lot of holes to fill, but since we didn’t spend a day or two every sprint doing manual regression testing, we had time to tackle other automation challenges. Eventually, our test automation triangle looked like the classic “pyramid” above.

Successful automation testing requires a big investment over time. Start small. A good way to get started with automating unit tests is to write them for all bug fixes - or even better, test-drive them. You may decide to start automating API tests by doing them for all new stories or features going forward. Or, if manual regression testing is sucking up all your time, take advantage of tools that let you quickly get up to speed on UI test automation and create some robust UI tests that cover the most critical areas, so you can devote more time to expanding your automation efforts to other levels. For sustainable automation testing that lets you release frequently and confidently, your team will need to invest time in learning good automation principles and patterns.
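Here is a small sketch of what "write a unit test for every bug fix" can look like. The bug is hypothetical: imagine a report that 21 items at 10 per page showed only 2 pages because `page_count` used plain integer division. The test reproduces the report first, then stays in the suite to keep the bug from coming back.

```python
import unittest


def page_count(total_items, page_size):
    """Hypothetical fixed function.

    The imagined buggy version returned total_items // page_size,
    which dropped the last partial page.
    """
    if page_size <= 0:
        raise ValueError("page_size must be positive")
    return -(-total_items // page_size)  # ceiling division


class PageCountRegressionTest(unittest.TestCase):
    def test_partial_last_page_is_counted(self):
        # Reproduces the hypothetical bug report: 21 items, 10 per page.
        self.assertEqual(page_count(21, 10), 3)

    def test_exact_multiple_is_unchanged(self):
        self.assertEqual(page_count(20, 10), 2)

    def test_empty_list_has_no_pages(self):
        self.assertEqual(page_count(0, 10), 0)
```

If you test-drive the fix, you would write `test_partial_last_page_is_counted` first, watch it fail against the old code, and only then change `page_count`.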

What if your first experiment fails? Maybe you automate some tests and then find they are unreliable or impossible to maintain. Awesome - you have learned a lot, and that will help you going forward! Don’t be afraid to scrap ineffective tests or a tool that isn’t working for you and start over. Don’t fall victim to the “sunk cost fallacy”. As Seb Rose has said, “if a test doesn’t help you learn something about the system, then delete it!”

References and more reading:

Seb Rose, http://claysnow.co.uk/architectural-alignment-and-test-induced-design-damage-fallacy/

Katrina Clokie, A Practical Guide to Testing in DevOps

Lisa Crispin and Janet Gregory, More Agile Testing: Learning Journeys for the Whole Team

Noah Sussman, “Abandoning the Pyramid of Testing in favor of a Band-Pass Filter model of risk management”

Alister Scott, “Testing Pyramids & Ice-Cream Cones”
