The Outer Loop Problem

AI coding agents are shipping code faster than teams can test it. The gap isn't closing. It's widening.

Two Speeds of Software Delivery

Software delivery has always had two loops. The inner loop is where code happens: commits, pull requests, AI suggestions, unit tests. It is fast, developer-owned, and increasingly automated.

The outer loop is where software gets validated as a system: integration tests, end-to-end user journeys, regression coverage. It is slower by nature and harder to keep current.

For years, this gap was the status quo. When AI coding agents arrived, however, the inner loop sped up dramatically, and the outer loop has not kept up.

Inner Loop

Developer and AI-assisted

→ AI suggests, writes, and iterates code

→ PRs open in minutes, not hours

→ 50+ commits per day on active teams

→ Unit tests run in CI automatically

Outer Loop

Still mostly human-dependent

→ Integration tests written manually

→ Flaky tests slow pipeline confidence

→ Maintenance backlog grows every sprint

→ User journeys validated inconsistently

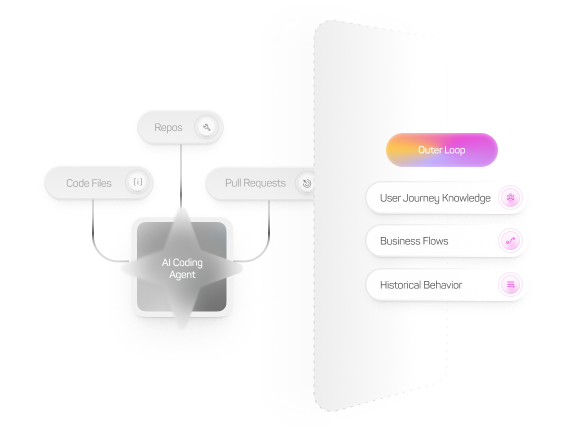

AI Coding Agents Weren't Built for the Outer Loop

AI coding agents excel in the inner loop. They can read your codebase, suggest changes, and write unit tests for individual functions. The problem is that they work within the code context of a single PR.

These agents don't accumulate knowledge of how your application behaves as a system. The user journeys that span multiple teams' work, the historical failure patterns, and the business-critical flows all live in the outer loop, out of their reach. Validating them is a fundamentally different problem.

As AI agents accelerate the pace of change, the coverage that would catch the resulting bugs goes under-maintained and falls further behind.

What the Outer Loop Needs

Writing more Playwright tests or hiring more QA engineers addresses the symptom, not the architecture responsible for the problem. The pace of code generation has permanently outrun the pace of human-authored test maintenance.

What the outer loop needs is something architecturally different: a system that accumulates knowledge of your application over time, one that understands your user journeys well enough to validate them continuously and to recover automatically when the app changes.
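To make "accumulates knowledge" and "recovers automatically" concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of how mabl or any other product works. The names `JourneyStep` and `run_journey` are hypothetical, and the page is modeled as a plain set of selector strings so the example runs without a browser.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class JourneyStep:
    """One step of a user journey, e.g. 'click the checkout button' (hypothetical model)."""
    name: str
    # Selectors that worked in earlier runs, most recently successful first.
    known_selectors: List[str] = field(default_factory=list)

    def resolve(self, page_selectors: Set[str]) -> Optional[str]:
        """Return the first known selector still present on the page.

        The selector that works is moved to the front of the list, so the
        step remembers what it learned for the next run.
        """
        for selector in self.known_selectors:
            if selector in page_selectors:
                self.known_selectors.remove(selector)
                self.known_selectors.insert(0, selector)
                return selector
        return None


def run_journey(steps: List[JourneyStep], page_selectors: Set[str]) -> Dict[str, str]:
    """Check every step against the current page and label it ok, recovered, or broken."""
    report: Dict[str, str] = {}
    for step in steps:
        primary = step.known_selectors[0] if step.known_selectors else None
        found = step.resolve(page_selectors)
        if found is None:
            report[step.name] = "broken"      # no known selector matches: needs attention
        elif found == primary:
            report[step.name] = "ok"          # the preferred selector still works
        else:
            report[step.name] = "recovered"   # a fallback matched after the app changed
    return report
```

In this sketch, a step whose preferred selector disappears is reported as "recovered" rather than failing outright, and the same step reports "ok" on the next run because the working selector has been promoted. A real system would need far richer signals than selector strings, but the shape is the point: the test carries state forward instead of being rewritten by hand after each change.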

The Stakes

When outer loop coverage breaks down, bugs reach production. This doesn’t happen occasionally; it’s systematic. Production incidents stop being surprises and start being the predictable output of a process where code ships faster than it can be validated.

The outer loop isn't a QA problem. It's an engineering infrastructure problem. And it needs an infrastructure-grade answer.

See How mabl Addresses This

Active Coverage is how mabl closes the outer loop gap: continuously, automatically, at the pace your team ships.